Design for Security – Why Proper Architecture Matters to ICS Security

I wanted to take some time today and share with you my thoughts on fundamental ICS (cyber)security.

With all the shiny, new and valuable OT-aimed security products released in the past few years, it might be tempting to think that all your industrial security woes can be addressed with a single product or solution (especially given the way some of these products are promoted). However, securing an ICS environment cannot and never will be as simple as installing a single product. It's a never-ending battle in an ever-changing landscape. If you want to be successful at securing your ICS, you need to start from the bottom up with a security-minded and security-friendly design of the ICS network architecture. Once a solid foundation is built, you can start adding bells and whistles. After all, it is useless to hang flower boxes on your newly built home if the struts you're trying to hang them from cannot support them (or are not there).

Before I elaborate on this, let's quickly go over the history of the ICS environment and look at how things evolved into the converged mesh (or mess) of devices and systems we operate today.

A little history lesson in how things came to be

When PLCs first started replacing the racks of relays that used to run the automation process, they weren't much more than that: a replacement for the racks of relays. They were a very compact and sophisticated replacement, though, one that allowed you to make changes without having to rewire half your plant and that took up only a fraction of the control room space. The first PLC implementations were standalone islands of automation where you needed specialized "programming terminals" to connect to the PLC and make changes.

The popularity of the PLC quickly grew, and vendors started adding conveniences such as remote IO, universal programming stations, HMIs and inter-PLC communications. To deliver these new features, automation companies started developing communication protocols and media, most based on some form of serial communications and all of them proprietary and different from one another. Some early industrial protocols are Modbus, ICCP, DNP and CIP.

As these proprietary communication protocols were initially designed for short-distance, point-to-point communications, they didn't incorporate security measures such as authentication, authorization, or encryption. Why would you need that on a single wire between two devices where only those two devices can talk? The inherent ICS security problems started when these early protocols began to be used for communications between multiple devices, over longer distances. Even though the media the protocols ran on changed in some cases to allow for the longer distances, the protocol itself (the way commands and instructions are communicated) remained the same for backwards compatibility.
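To make the missing security fields concrete, here is a minimal sketch that builds a Modbus/TCP "Read Holding Registers" request by hand. The frame layout follows the public Modbus specification. The function name and the example values are my own choices for illustration.

```python
import struct


def build_read_holding_registers(transaction_id: int, unit_id: int,
                                 start_address: int, quantity: int) -> bytes:
    """Build a Modbus/TCP 'Read Holding Registers' (function 0x03) request."""
    # PDU: function code (1 byte), starting address (2 bytes), quantity (2 bytes).
    pdu = struct.pack(">BHH", 0x03, start_address, quantity)
    # MBAP header: transaction id, protocol id (0 for Modbus), length, unit id.
    # The length field counts the unit id byte plus the PDU.
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu


# Illustrative values: read 10 registers starting at address 0 from unit 1.
frame = build_read_holding_registers(transaction_id=1, unit_id=1,
                                     start_address=0, quantity=10)
print(frame.hex())  # 00010000000601030000000a
```

Those twelve bytes are the whole request. There is no field for a username, a password, a session key or a signature, so any device that can reach the target over the network can send it.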

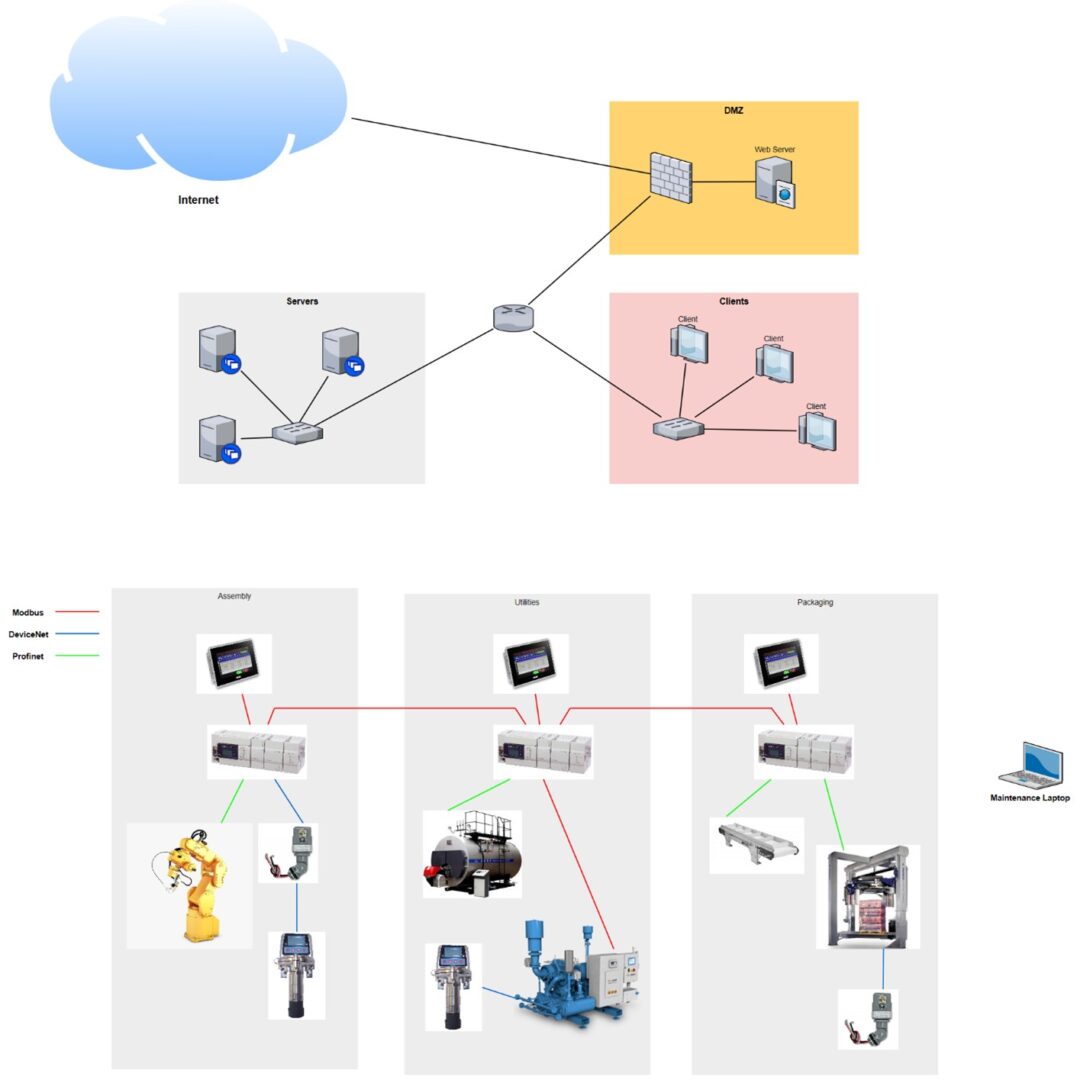

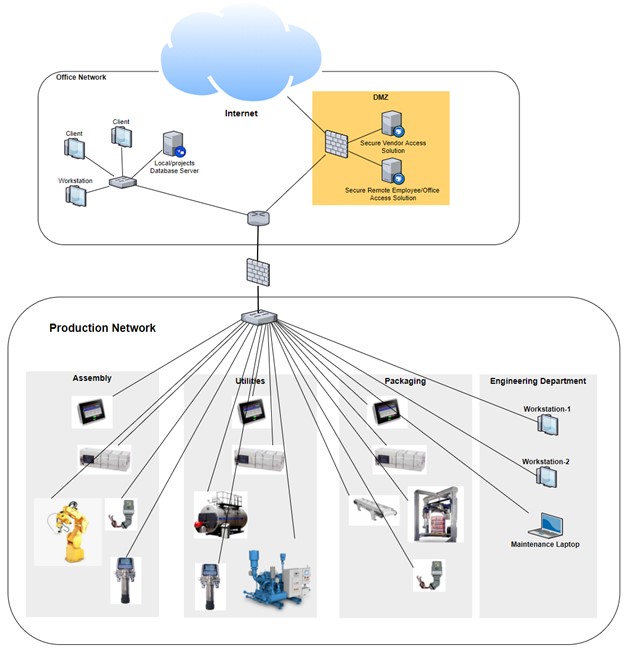

So here we are, early to mid-90s, with automation and control systems evolved into complex mazes of interconnected systems, devices and other equipment, running on proprietary communication media and talking proprietary, inherently insecure protocols. And life was good, at least from an ICS security perspective. Even though the traffic between all these devices was wide open, plain-text and ripe for manipulation and attack, very few attackers could make it onto those communication networks. It had to be someone inside the ICS facility with the equipment and the knowledge to start messing with stuff. Meanwhile, regular networking and information technology (IT) had evolved to the point where the business side of the manufacturing facility was completely converged onto Ethernet; some sites had even tossed their hubs and installed switches. So, by the end of the '90s a typical manufacturing plant would look like this, from a business network versus ICS environment perspective:

From a cybersecurity perspective this architecture is very desirable. The maintenance laptop would need to be infected with a Remote Access Trojan (RAT), or be remotely accessible in some other way, while connected to the ICS equipment before this setup could be remotely compromised. So pretty ideal, right? Well, as with all good things, this didn't last. The biggest drawback of the setup shown here is the variety of technologies. With every vendor bringing their own type of communication media and modules, the number and variety of spares needed to keep your facility safely stocked grows rapidly with the amount of ICS equipment you own, as does the technical knowledge a person needs to support it all. Not only did every vendor implement their own proprietary technology, things also changed within each vendor's offerings.

These facts, along with the increased demand for communications bandwidth, ease of use and manageability, drove the demand for a common denominator in the communications field. As the ICS vendors were not likely to agree on a single protocol, the next best thing was chosen: a common way to transmit commands over a shared media network. Ethernet was chosen for the job. Vendors were able to easily adapt their proprietary protocols to the Ethernet networking standard by simply encapsulating the protocol in an Ethernet frame. They were even able to add routing and sessions by incorporating IP and TCP. Things started to look up for the overwhelmed support engineer, who now only needed to know how to deal with IP networking. So, everything slowly moved onto the Ethernet bandwagon, and when I say everything, I mean everything: PLCs, HMIs, sensors, actuators, valve blocks, signal lights, … By the mid-2000s a typical plant would look like this from an ICS and business network perspective:

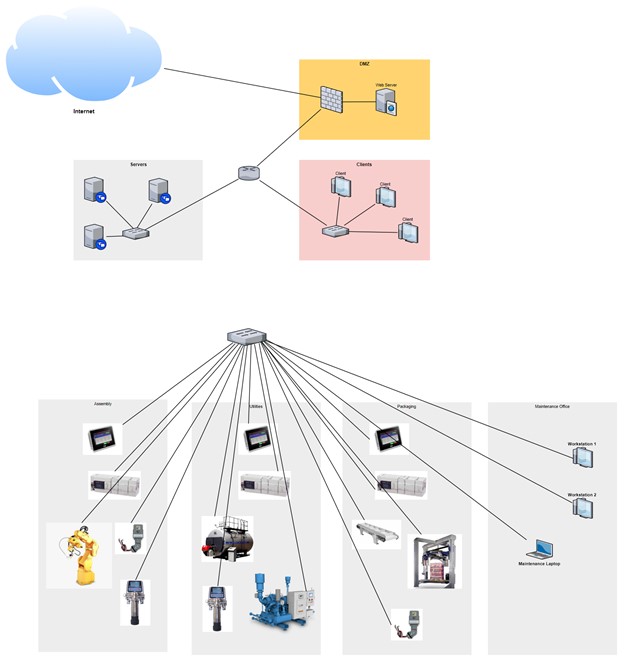

Fantastic! Everything was now connected; anything could talk to anything else, and all of it was accessible from the maintenance office or wherever you could hop on the network. The controls engineer in me found this the golden age of automation networking. I remember commissioning a new production line from a rooftop in Los Angeles while connected to the wireless network we had just installed. What freedom! But here comes the caveat: that wasn't enough. Engineers wanted business email on their engineering workstations, the plant manager wanted on-the-fly, up-to-date production stats on his PDA while sitting at the beach in Tahiti, and plant controllers, quality personnel and Six Sigma black belts wanted uptime numbers, production statistics, counts, downtime tracking and other "Overall Equipment Effectiveness (OEE)" data. So, what was the most natural thing to do? Why not tie the two separate networks together? Both are Ethernet and run IP and TCP, and once they are connected we can access all the data we need. So, this happened:

Or this, in some cases:

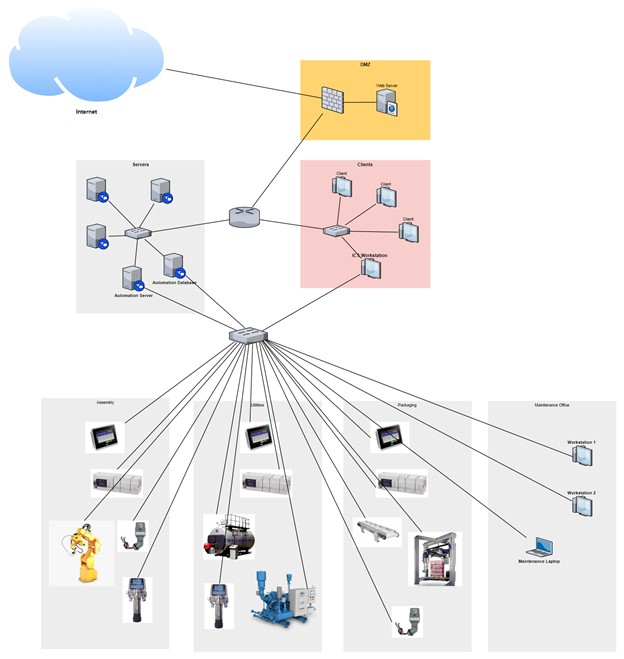

Most of us controls engineers made the mistake of using one of these two "fixes" to the problem. I know I did. But after coming to our senses and forming a bond with our IT department, we settled on the following:

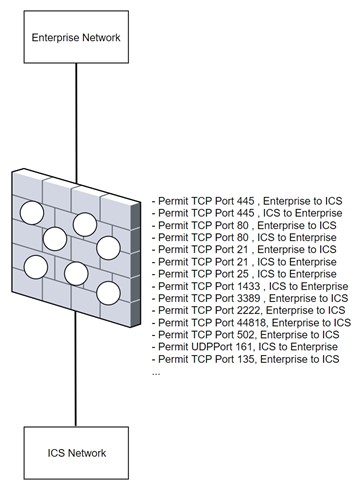

This is a pretty decent compromise and seems to cover the functionality and security requirements we are after, or at least we controls engineers think so. The reality is that over time the firewall in the middle becomes Swiss cheese. We find more and more protocols and features we want to allow through, so before long that firewall will look like this:

With all these firewall exceptions in place, is it still a firewall? We now have unfettered access from the enterprise network to the industrial network, and we have industrial protocols with no regard for security leaking from the ICS network to the business side. An attacker no longer needs to be on premises to attack the ICS. Any attacker with a foothold in the business network can now do damage. This setup also allows for the pivot attacks we have been seeing more and more often, where individuals in a company receive phishing emails with malware attached that, once opened, allows a remote connection to the individual's computer from anywhere in the world. This access lets the attacker pivot and find a way into the ICS network, which, with a Swiss cheese firewall in place, is a matter of scanning for the right protocols. This is how things evolved over time. I am not trying to generalize about every ICS owner, but in my experience the bulk of them will have some form of the Swiss cheese architecture in place. I have seen companies implement a far more secure setup and I have seen some implement far less, but typically this is what you find out there.

What follows is my recommendation for a secure ICS network setup. It comes from two decades of trial and error in building industrial networks, as well as being part of the teams that defined the industry recommendations and consulting with some of the largest manufacturers on the planet. This is how I would go about building your network if you asked me.

It all starts with a solid foundation

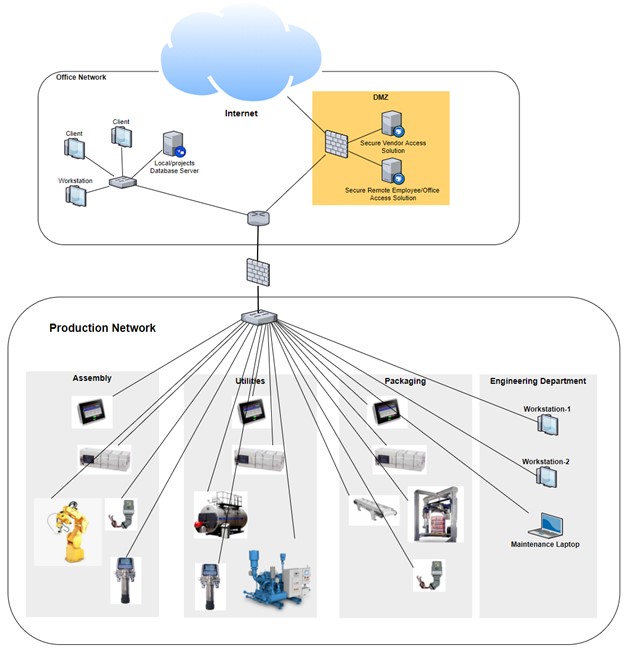

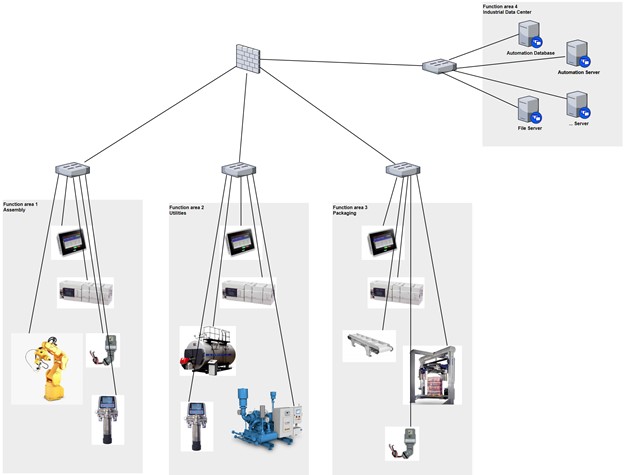

Fundamentally, securing the ICS network is fairly straightforward. You don't have to deal with exotic devices like mobile phones, laptops, wireless and BYOD. Well, let me rephrase that: you SHOULD NOT deal with these devices. The first major step towards a secure ICS is to move anything but the systems and devices that are essential to the production process onto the enterprise or business network. This includes laptops, workstation computers, wireless access points, and so on. This is the first cornerstone of the security foundation that an ICS network should be built upon: segmentation. With segmentation you define an area of the network dedicated to the ICS process-essential equipment only; let's call it the Industrial zone. Within the industrial zone you should further subdivide into functional areas and place related equipment into these functional areas, separated from other functional areas. Ideally this separation is done with physically separate network equipment, but using VLANs works too. The idea is that all functional-area-specific control traffic stays within the functional network area. This is achieved by grouping together any ICS equipment that needs to communicate into its own network subnet. For any traffic that must leave the functional area, for example communications to a shared resource like the boiler room or statistical data sent to the Industrial data center (more on this later), we connect the functional areas together through a firewall (a router or layer 3 switch works too, but a firewall is ideal). This allows restriction, control and inspection of network traffic.
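As a rough illustration of the subnet-per-functional-area idea, the sketch below uses Python's standard `ipaddress` module to carve an industrial zone into one subnet per functional area. It then checks whether a conversation stays inside an area or has to cross the firewall. The address range, the area names and the helper functions are all made up for the example and are not a recommended addressing plan.

```python
import ipaddress

# Hypothetical address plan: one /16 for the industrial zone,
# one /24 per functional area. Names and ranges are illustrative only.
INDUSTRIAL_ZONE = ipaddress.ip_network("10.10.0.0/16")
AREA_NAMES = ["packaging", "boiler_room", "assembly", "industrial_data_center"]


def plan_functional_areas(zone, names, prefix=24):
    """Assign each functional area the next free subnet of the zone."""
    subnets = zone.subnets(new_prefix=prefix)
    return {name: next(subnets) for name in names}


def area_of(address, plan):
    """Return the functional area an address belongs to, or None."""
    ip = ipaddress.ip_address(address)
    for name, subnet in plan.items():
        if ip in subnet:
            return name
    return None


def crosses_firewall(src, dst, plan):
    """True when traffic leaves its functional area (or an address is unknown)."""
    src_area, dst_area = area_of(src, plan), area_of(dst, plan)
    return src_area is None or dst_area is None or src_area != dst_area


plan = plan_functional_areas(INDUSTRIAL_ZONE, AREA_NAMES)
for name, subnet in plan.items():
    print(f"{name}: {subnet}")
```

With this plan, a PLC at 10.10.0.5 talking to an HMI at 10.10.0.20 stays inside the packaging subnet. The same PLC talking to 10.10.1.7 in the boiler room has to pass through the firewall, where the traffic can be restricted and inspected.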

The result of proper segmentation is a significantly reduced attack surface. Even if something malicious were to make it into the industrial zone, it would be contained within the functional area, whereas in the flat architecture shown earlier everything can talk to (attack) everything else.

Proper segmentation has added benefits. One is performance: by minimizing the network broadcast domain, equipment spends less time listening to other equipment talk. Another is that segmentation lets us create network traffic choke points, spots in the network where we can easily and efficiently capture interesting network traffic. The figure below shows what our example architecture from before will look like if we apply proper segmentation:

Within this segmented architecture we keep all related equipment in its own functional area and network subnet. This helps with security as well as performance. The firewall gives us controlled access between functional areas. The industrial data center is a server or collection of servers used for interactions with the ICS equipment, such as programming, troubleshooting or data collection. Typically, the industrial data center is a virtualization platform where we can create virtual machines that run the various automation and control platform suites. As we will discuss later, these virtual machines are the only way to communicate with the automation equipment and the only servers the automation equipment can send data to. The virtual machines live in the Industrial zone, and access to them is highly controlled and monitored, which is the topic of the next section.

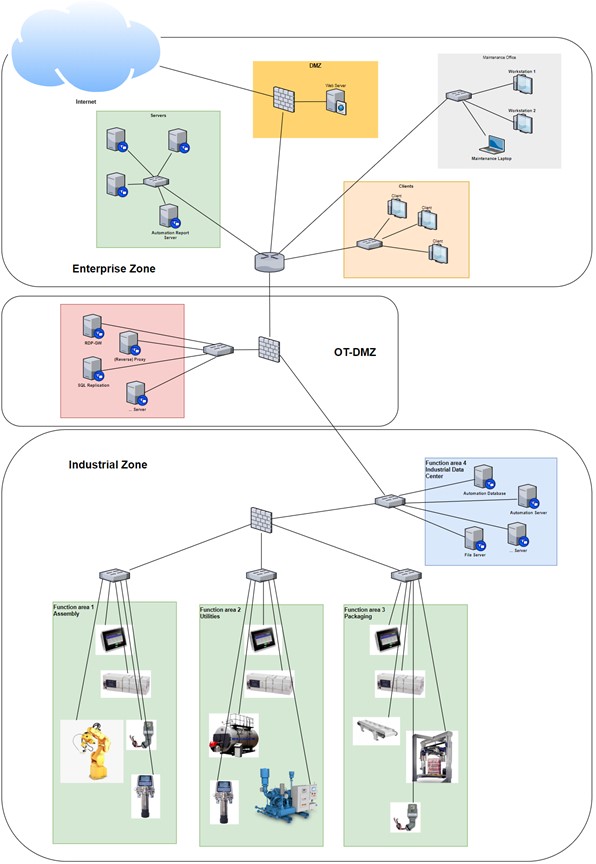

The second cornerstone of the security foundation that we are building the ICS network upon is controlled access. We can all agree that if we could run the ICS completely separated, we would end up with a much more secure environment. However, the current trend in the industrial space is to have more and more interactions with the ICS equipment. So how can we provide a secure way of allowing this interaction to take place? Consider a similar situation on the traditional IT side, where internet users of a public-facing website need to securely retrieve information from systems within the business network. Traditional IT staff deal with this by implementing a Demilitarized Zone or DMZ. The principle of a DMZ is to create a middle ground between an organization's trusted internal network and an untrusted, external network such as the Internet. Also called a "perimeter network," the DMZ is a sub-network (subnet) that may sit between firewalls or off one leg of a firewall. Organizations typically place their web, mail and authentication servers in the DMZ. Interactions from internet users go through these servers in the DMZ, which essentially broker the traffic. If a server gets compromised, the compromise is contained within the DMZ. If we apply the tried and proven IT concept of a DMZ to the ICS network and define the business network as the untrusted network, we end up with the following architecture:

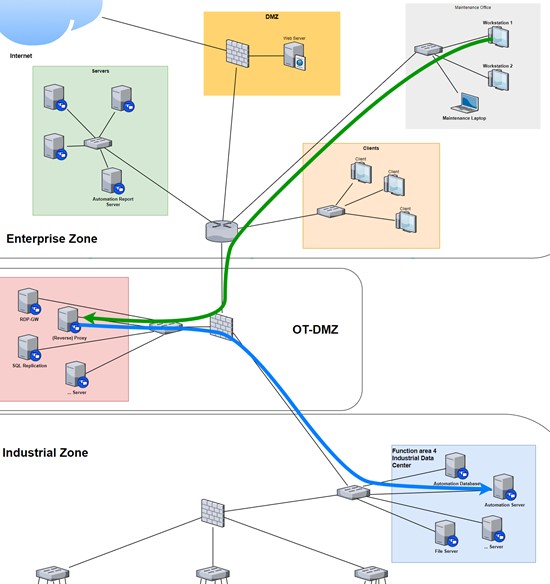

Within this architecture we have all the controls and design in place to properly segment the ICS network (Industrial Zone) from the business network (Enterprise Zone) and to provide controlled access between the Enterprise Zone and the Industrial Zone, in both directions, with the help of a DMZ (OT-DMZ). Let's go over two usage examples to illustrate how this architecture would work.

Access to controls software via Remote desktop access to the Industrial Zone.

In this example a workstation in the Maintenance Office (Workstation 1) wants to use programming software like Siemens TIA or Rockwell Studio 5000 to perform development or troubleshooting activities on the ICS equipment in the industrial zone. The programming software is installed on an "Automation server" VM in the Industrial Datacenter in the industrial zone. The user of Workstation 1 would initiate a remote desktop session to the automation server the same way as he would to an endpoint on the Enterprise network, except that his RDP client is configured to use the RDP Gateway server in the OT-DMZ. In short, the RDP-GW server brokers a remote desktop connection by switching protocols. The connection to the gateway server is over HTTPS (port 443), and the connection from the gateway server to the automation server uses the remote desktop protocol (port 3389). The figure below illustrates the connection path:

Note that while the targeted Automation server is allowed to access assets in the other functional areas, direct access from the OT-DMZ is prevented. This can be enforced with OT-DMZ firewall rules, with authentication and authorization on the RDP-GW server (allow Workstation 1 to connect only to the Automation server and nothing else), and with restrictions on the automation server. This setup also allows inspection of network traffic at multiple points. To show the strength of a DMZ, an exploit like BlueKeep (https://www.bleepingcomputer.com/news/security/bluekeep-remote-desktop-exploits-are-coming-patch-now/) would be useless against the RDP service of the Automation server in the Industrial Zone, because the only way to reach the Automation server is via the DMZ RDP-GW, which uses the HTTPS protocol on the untrusted side (where the attack would originate).
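The remote desktop path described above can be sketched as a small default-deny allowlist. This is only a model of the idea, not the configuration syntax of any real firewall, and the host names (`workstation-1`, `rdp-gw`, `automation-server`, `plc-1`) are placeholders I made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One allowed flow: source host, destination host, destination TCP port."""
    src: str
    dst: str
    port: int


# Hypothetical allowlist for the remote desktop path:
# HTTPS into the OT-DMZ gateway, RDP from the gateway to the automation server.
ALLOW_RULES = [
    Rule("workstation-1", "rdp-gw", 443),
    Rule("rdp-gw", "automation-server", 3389),
]


def is_allowed(src, dst, port, rules=ALLOW_RULES):
    """Default deny: a flow passes only if it exactly matches an allow rule."""
    return Rule(src, dst, port) in rules


print(is_allowed("workstation-1", "rdp-gw", 443))              # True
print(is_allowed("workstation-1", "automation-server", 3389))  # False
print(is_allowed("rdp-gw", "plc-1", 502))                      # False
```

The second check is the interesting one. RDP straight from the enterprise workstation to the automation server is denied, so an RDP exploit launched from the enterprise side never reaches port 3389 in the Industrial Zone.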

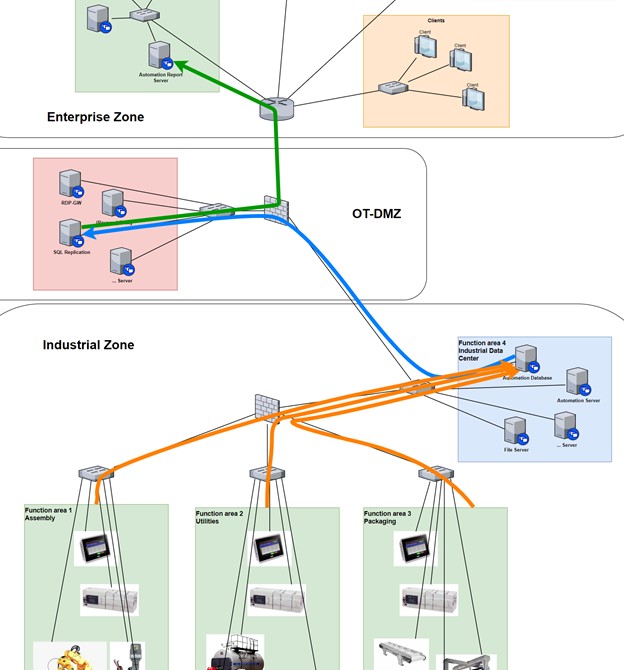

Generating production reports, grabbing production data

The second example is about collecting data from the production floor. The data of interest would be sent from the production floor (the functional areas) to the automation database (Pi) in the Industrial Data Center. The Automation database server would then push or replicate that data to an enterprise database server, or to an enterprise report server for automation reports. The data flow is depicted in the figure below:

There are many more examples to explore. As a matter of fact, every unique interaction from the enterprise zone to the industrial zone and vice versa has to be thought out this way. Adhering to a few fundamental rules will go a long way towards making sure the right solution is chosen for each interaction:

- No direct access between the industrial and enterprise zones should be allowed.

- The OT-DMZ should only communicate to servers in the Industrial Data Center. (not to the production areas)

- Production data and industrial protocols should only be allowed between functional areas. (not to the OT-DMZ)
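One way to apply these rules when reviewing a proposed interaction is to write the flow down as a list of zone hops and check every hop against an allowlist. The sketch below is my own reading of the three rules. The zone names and the `first_violation` helper are hypothetical, and traffic that stays inside a single zone is treated as out of scope.

```python
# Zone-to-zone hops permitted by the rules above (direction-agnostic).
# Zone names are illustrative labels, not product terminology.
ALLOWED_HOPS = {
    frozenset({"enterprise", "ot_dmz"}),
    frozenset({"ot_dmz", "industrial_data_center"}),
    frozenset({"industrial_data_center", "functional_area"}),
}


def first_violation(path):
    """Return the first (src, dst) hop that breaks the rules, or None."""
    for src, dst in zip(path, path[1:]):
        if src == dst:
            continue  # traffic staying inside one zone is not judged here
        if frozenset({src, dst}) not in ALLOWED_HOPS:
            return (src, dst)
    return None


# Production report flow, brokered hop by hop.
report_flow = ["functional_area", "industrial_data_center", "ot_dmz", "enterprise"]
# A tempting shortcut: the enterprise side talking straight to the plant floor.
shortcut = ["enterprise", "functional_area"]
# A DMZ server reaching past the data center into a production area.
dmz_overreach = ["enterprise", "ot_dmz", "functional_area"]

print(first_violation(report_flow))    # None
print(first_violation(shortcut))       # ('enterprise', 'functional_area')
print(first_violation(dmz_overreach))  # ('ot_dmz', 'functional_area')
```

A design review can then ask one question of each new data flow: does every hop appear in the allowlist? If not, the flow needs a broker in the OT-DMZ or the Industrial Data Center.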

And there you have it, two simple rules to keep in mind when designing your ICS network:

- Segment your network into enterprise and industrial zones, and subdivide the industrial zone into functional areas.

- Control all access into and out of the industrial zone by use of a properly designed DMZ.

And now that the foundation is properly laid out, adding those bells and whistles we talked about at the beginning of this article will be easier and more effective. As a first logical step after a proper security architecture is built, I suggest adding a passive OT asset management and/or vulnerability detection appliance like the ones Claroty, CyberX or Indegy provide. Those products give you a fairly complete picture of "what you have out there" and "what is wrong/lacking with what you have", two very important areas of concern within a security posture. Another worthwhile addition at this point would be a Security Information and Event Management (SIEM) solution that helps you gather, store and correlate events and logs, giving you a convenient way to start monitoring the security posture of your ICS. Implementing any of these products is now a breeze too, because we designed the architecture with security in mind. For example, we designed network traffic choke points right into the ICS architecture, which provide the packet captures the passive tools need to do their work.

This is what I call design for security.

About the Author

Pascal Ackerman is a seasoned industrial security professional with a degree in electrical engineering and 18 years of experience in industrial network design and support, information and network security, risk assessments, pentesting, threat hunting and forensics. After almost two decades of hands-on, in-the-field and consulting experience, he joined ThreatGEN in 2019 and is currently employed as Principal Analyst in Industrial Threat Intelligence & Forensics. His passion lies in analyzing new and existing threats to ICS environments, and he fights cyber adversaries both from his home base and while traveling the world with his family as a digital nomad.